Integrating machine learning to large-scale physical simulations

Machine learning (ML) and data-driven techniques have been steadily gaining popularity thanks to their ability to closely approximate functions at a fraction of the time required by traditional numerical methods. Theoretical results show that Artificial Neural Networks can approximate any function with arbitrary precision, and the research literature is full of examples of successful applications of ML techniques to a wide range of problems. It only makes sense to investigate when and how to enable such techniques for large-scale simulations in general, and how to do that in a standardised way. We are developing SiMLInt, an interface that would allow users to use ML models in their simulations as easily as possible.

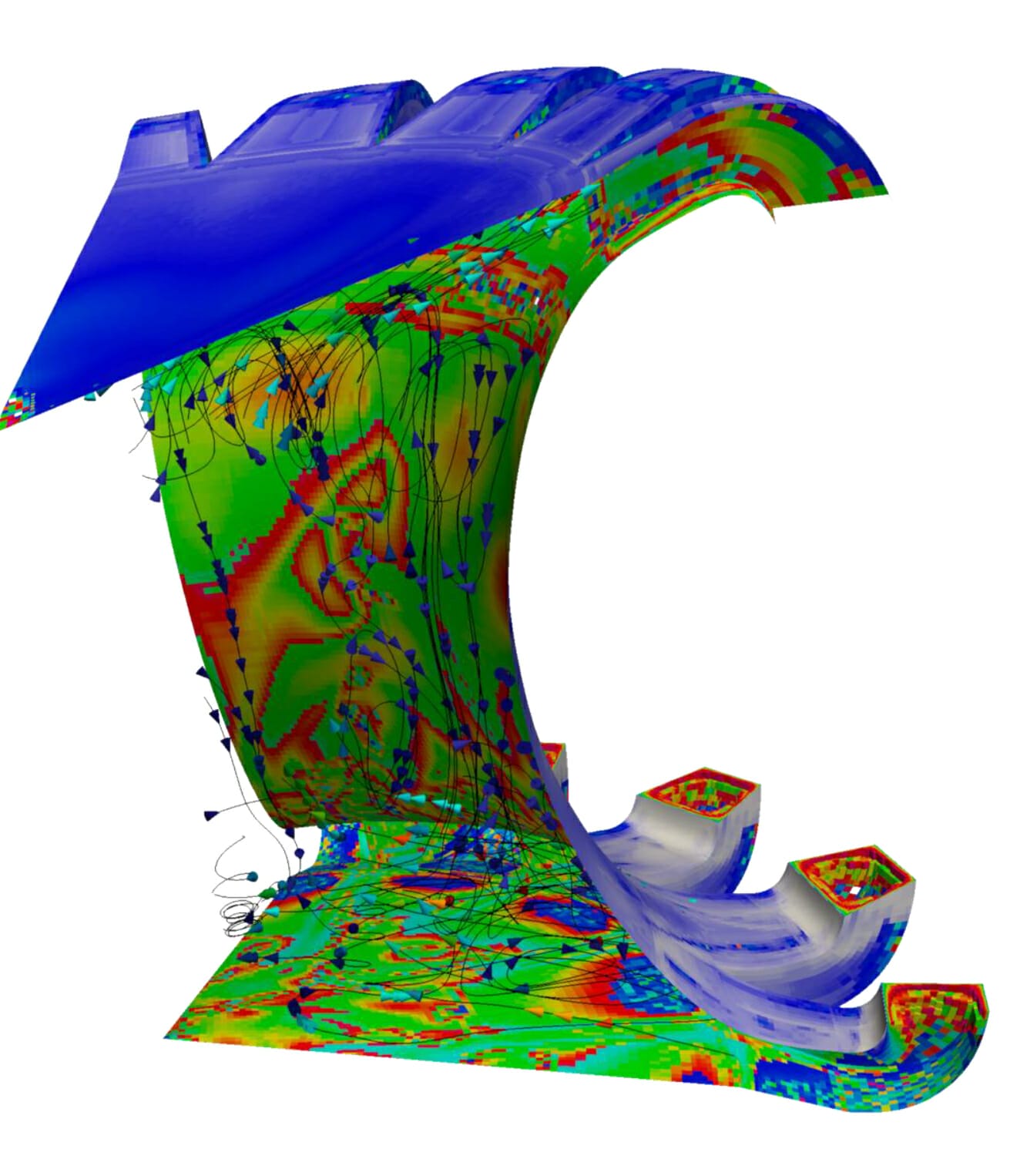

Large and complex physical systems are often simulated by breaking up the domain to smaller parts and resolving each of those numerically. Often, to get a solution with a desired precision, the break-down needs to be very fine; in order to simulate a system over a period of time one might need to solve billions of equations. Fortunately, decomposing the simulation domain usually results in good speed-up by parallelising the numerical computations. Machine learning inference, on the other hand, typically does not require parallelisation as the process is very fast. SiMLInt is designed so that the use of ML models in a parallel simulation still provides benefits in terms of speed of the overall computation, taking care of orchestrating the ML inference with the rest of the numerical calculations and supporting an efficient data exchange between the simulation engine and the inference model. The interface will help the users ensure that the performance of their code does not decrease due to the integration of ML tools.

The ML inference itself is very fast and so it may seem that the precise choice of the tool or framework might not have that big an impact on the overall performance. However, the number of tools and libraries providing various data-driven tools is growing fast and not all hardware platforms support all existing libraries, and not all versions are compatible with various software. It is becoming challenging to navigate all these tools, to identify the one most suitable for the job and to be able to use it. SiMLInt provides a general interface with default choice of tools that are tested to be general, performant and robust, so the users can get reliable functionality by simply making a SiMLInt call. At the same time the interface is designed to be extendable, so any new ML toolkit can be easily added to the ensemble and made available to the users.

SiMLInt aims to simplify the integration of the ML-based inference models with the simulation code, but an integral part of the project is to support the users in developing and training these models in the first place. The data-driven and machine learned inference is based on “fitting” the models to a set of pairs of inputs and desired outputs, the so-called “training data”. This data can be collected from the traditional numerical simulations and shaped to a format suitable for the model the user wishes to fit it to. There is a chance that the model may learn a different function from the training data than the one the user intended and introduce a systematic bias to the simulation, which is one of the main risks of the data-driven or machine learned approximations. Considering the high stakes, we will supplement the ML interface with a range of training materials and examples based on the high-priority use cases, which will show how to collect unbiased training data, fit a model and validate the results.

SiMLInt is being developed at the University of Edinburgh between EPCC and the School of Mathematics, but fundamentally it is a multi-disciplinary pursuit. We are keen to get feedback or hear any ideas that might help us improve SiMLInt or its usability!

—

Contact:

Project Team:

or

Amy Krause (lead):

Anna Roubickova (knowledge exchange coordinator):

—